Eval Criteria # 23

How does the system's API support the model?

Occasionally (or way too often, depending on the system) the content modeling features of a CMS come up short. In these cases, you can sometimes fall back on the system’s API and use it to “code around” the shortcomings.

Your ability to do this depends on whether you can write code against the CMS at all (many SaaS platforms have no option for this), and to what extent. APIs vary greatly in the access and capability they provide.

Event-Based Programming

Many CMSs have an event model (sometimes called hooks) by which you can attach code execution to specific events which take place inside the CMS. Some common events might be:

- Content created

- Content saved

- Content published

- Content deleted

These events usually mirror the basic editorial lifecycle of the system, and there will be more or fewer of them depending on the specific features offered by the CMS. There might be “check in” or “archived” events, or “move” events in tree-based systems.

To “hook,” “handle,” or “subscribe to” an event is to supply programming code which executes when that event occurs. In most cases, you can insert your code just before the event occurs, or just after it has occurred. Executing code before an event usually offers the ability to change the data the event is working with.

For example, if you want to ensure that no article ever contains profanity, you could subscribe to the event which occurs immediately prior to content being saved back to the repository, and inspect the attribute values of the object being saved, changing them when necessary, and ensuring that the object saved back to the repository meets your standard.
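To make that concrete, here is a minimal sketch of a pre-save handler. Everything in it is an assumption for illustration: the `EventBus` and `Repository` classes, the `content_saving` event name, and the word list are stand-ins, not the API of any particular CMS.

```python
import re
from typing import Callable, Dict, List

Content = Dict[str, str]            # hypothetical content object: attribute name -> value
Handler = Callable[[Content], None]


class EventBus:
    """Toy stand-in for a CMS event model."""

    def __init__(self) -> None:
        self._handlers: Dict[str, List[Handler]] = {}

    def subscribe(self, event: str, handler: Handler) -> None:
        self._handlers.setdefault(event, []).append(handler)

    def fire(self, event: str, content: Content) -> None:
        for handler in self._handlers.get(event, []):
            handler(content)


class Repository:
    """Toy repository that fires a before event and an after event around each save."""

    def __init__(self, bus: EventBus) -> None:
        self.bus = bus
        self.saved: Dict[str, Content] = {}

    def save(self, content_id: str, content: Content) -> None:
        self.bus.fire("content_saving", content)   # before: handlers may change the data
        self.saved[content_id] = dict(content)
        self.bus.fire("content_saved", content)    # after: the data is already stored


BANNED_WORDS = ["darn", "heck"]  # placeholder list
PATTERN = re.compile(
    r"\b(?:" + "|".join(re.escape(word) for word in BANNED_WORDS) + r")\b",
    re.IGNORECASE,
)


def scrub_profanity(content: Content) -> None:
    """Mask banned words in every text attribute before the object is stored."""
    for name, value in list(content.items()):
        if isinstance(value, str):
            content[name] = PATTERN.sub(lambda m: "*" * len(m.group()), value)


bus = EventBus()
bus.subscribe("content_saving", scrub_profanity)
repo = Repository(bus)
repo.save("article-1", {"title": "Well, heck", "body": "That darn server."})
print(repo.saved["article-1"])
```

Because the handler runs before the write, the repository only ever sees the cleaned values.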

Before and after events are usually differentiated by name or tense. The event prior to content being saved might be “Content Saving” or “On Before Content Saved.” The event after content being saved might be “Content Saved” or “On After Content Saved.” For the after event, clearly you can’t change any of the parameters that the event is operating on (it has already occurred, remember), but you can execute code that uses the content to affect other systems.

For example, you might want to manage your organization’s tweets in your CMS. You could create a Tweet type which is not URL-addressable. Then, in the “Content Published” event, you execute code to connect to the Twitter API and post the tweet. In this situation, you gain all the benefits of managed content – versioning, auditing, permissions, etc. – but your publishing target is an external system.
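A post-publish handler for this scenario could look something like the sketch below. The `ContentObject` class and the `post` callable are hypothetical. In a real integration `post` would wrap whatever client library you use for the external service, which is deliberately left out here.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class ContentObject:
    type_name: str
    text: str


def make_publish_handler(post: Callable[[str], None]) -> Callable[[ContentObject], None]:
    """Build a 'Content Published' handler that forwards Tweet objects to `post`."""

    def on_content_published(content: ContentObject) -> None:
        # Only the non-addressable Tweet type is pushed to the external system.
        if content.type_name == "Tweet":
            post(content.text)

    return on_content_published


# Stand-in for the external service: collect posts in a list.
outbox: List[str] = []
handler = make_publish_handler(outbox.append)

handler(ContentObject("Tweet", "New article is live"))
handler(ContentObject("Article", "Body text"))   # ignored by the handler
print(outbox)
```

Passing the posting function in, rather than hard-coding it, keeps the handler testable without touching the external system.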

Another example: let’s say your CMS has no category system, so you decide to rig one up via a custom content type. You create a Category type, with a repeating, referential attribute for Content. You can edit a category and assign content to it by adding references to the Content attribute value. Using this method, it’s easy for your category to list all the content assigned to it.

However, in your system, referential attributes are uni-directional. So your category is linked to content, but the content has no idea to what categories it is assigned.

You might solve this by creating an inherited, referential, repeating Categories attribute on all content objects that cannot be edited. Then, you could attach code to the Content Saved event. Whenever a category is saved, your code could compare the version of the category being saved with the prior version to determine the differences, then update the Categories attribute on all affected content to add or remove the saved category reference.
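The comparison step is essentially a set difference. The sketch below assumes the handler receives the category's ID along with its previous and current lists of content references. The `content_categories` dictionary is a stand-in for the read-only Categories attribute on each content object.

```python
from typing import Dict, Set, Tuple

# Stand-in for the read-only Categories attribute: content ID -> category IDs.
content_categories: Dict[str, Set[str]] = {}


def on_category_saved(
    category_id: str, previous_refs: Set[str], current_refs: Set[str]
) -> Tuple[Set[str], Set[str]]:
    """Mirror a category's content references back onto the content objects."""
    added = current_refs - previous_refs      # content newly assigned to the category
    removed = previous_refs - current_refs    # content no longer assigned

    for content_id in added:
        content_categories.setdefault(content_id, set()).add(category_id)
    for content_id in removed:
        content_categories.get(content_id, set()).discard(category_id)
    return added, removed


# First save: the category is created with two assignments.
on_category_saved("news", set(), {"a1", "a2"})
# Second save: a1 is dropped, a3 is added.
on_category_saved("news", {"a1", "a2"}, {"a2", "a3"})
print(content_categories)
```

After the second save, `a1` no longer lists the category, while `a2` and `a3` do.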

Webhooks

Many systems – especially SaaS systems – provide the ability to configure webhooks, which send a specially-formatted HTTP request to a specified URL when certain content events occur, such as when content is saved. The affected content is passed along with the HTTP request, and code on the other end (in an environment not controlled by the CMS) can take action on it.

Webhooks can be helpful, but they are usually post-event only, meaning they can be used to notify an external system that an event has occurred, but rarely do they contact the external system while an event is pending and incorporate any return value.

Some webhook systems send entire content objects with the request. So, when content is saved, a webhook might send an HTTP request to another system with a serialized representation of the entire content object being saved (in the request body, via a POST request). Other systems might send less information – such as just the ID of the affected content object – and require the receiving system to “reach back” to the CMS to get more information when necessary.
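A receiver often has to cope with both styles. The sketch below shows only the payload handling, not the HTTP server around it. It assumes a JSON body with either a `content` key (full object) or an `id` key (reference only). Both key names and the `fetch_content` callback are hypothetical, since every CMS defines its own payload format.

```python
import json
from typing import Any, Callable, Dict

# Stand-in for the CMS read API; a real receiver would make an HTTP request here.
FAKE_CMS: Dict[str, Dict[str, Any]] = {
    "42": {"id": "42", "title": "Fetched from the CMS"},
}


def handle_webhook(
    body: bytes, fetch_content: Callable[[str], Dict[str, Any]]
) -> Dict[str, Any]:
    """Return the affected content object, reaching back to the CMS if needed."""
    payload = json.loads(body)
    if "content" in payload:
        # The webhook sent the whole serialized object.
        return payload["content"]
    # The webhook sent only an ID, so ask the CMS for the rest.
    return fetch_content(payload["id"])


full_body = json.dumps(
    {"event": "content_saved", "content": {"id": "7", "title": "Sent in full"}}
).encode()
thin_body = json.dumps({"event": "content_saved", "id": "42"}).encode()

full = handle_webhook(full_body, FAKE_CMS.__getitem__)
thin = handle_webhook(thin_body, FAKE_CMS.__getitem__)
print(full["title"], "|", thin["title"])
```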

Event Programming and Versioning

When model hacking, there are two seemingly small API features that can be very helpful.

Sometimes you’ll want to modify content every time it’s saved. When doing this, it’s beneficial to save into the current version of an object.

Most CMSs are designed to version content, so they save a new version of content alongside the existing version. However, when updating from the API, you might create an excess of versions, which consumes storage, taxes the system, and makes it difficult for editors to isolate changes.

With some systems, you have the ability to not create a new version when saving from the API. Clearly, this might cause governance problems if used indiscriminately, but is helpful to limit extraneous versions and all the drawbacks that result from them.

Closely related to this is the ability to perform events “silently,” meaning to selectively not invoke event handling code in response to events. This is helpful when writing event-based code.

When responding to an event, you might incidentally re-invoke the event to which the code just responded. This can form an infinite loop of events – saving content executes some code which also saves the content, which then executes the same code, ad infinitum. In addition to never finishing, this might also proliferate new versions as fast as the system could generate them.

To avoid this, some systems will allow you to perform events from code with the additional instruction that this event should not invoke other events. The event you are triggering can be executed as an exception to the event handling model.

Like saving into the current version, short-circuiting the event handlers might have some unintended consequences. It needs to be carefully considered, but there are many model hacking scenarios where it’s critical to writing manageable code.
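Here is a rough sketch of how the two features work together. The `Repository` class and its `new_version` and `silent` flags are invented for illustration. Real systems that offer these options will name and expose them differently.

```python
from typing import Callable, Dict, List


class Repository:
    """Toy versioned repository whose saves normally trigger handlers."""

    def __init__(self) -> None:
        self.versions: Dict[str, List[dict]] = {}   # content ID -> version history
        self.handlers: List[Callable[["Repository", str, dict], None]] = []
        self.handler_calls = 0

    def save(self, content_id: str, data: dict,
             new_version: bool = True, silent: bool = False) -> None:
        history = self.versions.setdefault(content_id, [])
        if new_version or not history:
            history.append(dict(data))     # normal behavior: add a version
        else:
            history[-1] = dict(data)       # overwrite the current version in place
        if not silent:
            for handler in self.handlers:
                self.handler_calls += 1
                handler(self, content_id, data)


def add_word_count(repo: Repository, content_id: str, data: dict) -> None:
    """Post-save handler that stamps a word count onto the content."""
    updated = dict(data, word_count=len(data.get("body", "").split()))
    # Without silent=True this save would re-trigger the handler indefinitely;
    # without new_version=False every edit would leave two versions behind.
    repo.save(content_id, updated, new_version=False, silent=True)


repo = Repository()
repo.handlers.append(add_word_count)
repo.save("article-1", {"body": "one two three"})
print(repo.versions["article-1"], repo.handler_calls)
```

The editor's single save produces a single version, and the handler runs exactly once.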

Supplemental Indexing

When searching for content, sometimes it’s easier to go around the content model completely, and simply create a supplemental index. This means writing content into a storage system outside the CMS repository in a format that’s optimized for its intended use.

To continue the categorization example from above, let’s say you’ve found a way to make your references bi-directional, but you still run into synchronization problems, or have trouble finding descendant assignments. Rather than trying to keep your content model continually updated, you might just decide to go around it completely and store a representation of the category tree somewhere else.

XML is designed for modeling hierarchical structures. It has a query language designed specifically for this (XPath) and most programming languages have XML support built-in.

You could execute code on content publishing and simply write a file to the server with a complete representation of your category tree and all its assignments in an XML format designed for easy querying. In your template or delivery context, you might just read directly from this XML file rather than the repository.

And instead of keeping this file incrementally updated, you might just delete it entirely and rewrite it each time – depending on the system and hardware on which it runs, the operation might take just a few seconds, and you could likely execute your code in a separate process so the UI doesn’t wait for it to finish.

And therein lies the elegance of a supplemental index – it’s disposable. By definition, the content in the repository is the “true” content, and your index is just a representation of it designed specifically to allow easy and efficient searching. At any time, you can confidently delete the index and recreate it from scratch.
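As a minimal sketch of the XML approach, the code below rewrites the whole index file from a category structure and then answers a “what is in this category, including its descendants?” query from the file alone. The `CATEGORIES` data, the element names, and the file name are all assumptions. In practice the data would be read from the repository inside a publish handler.

```python
import os
import tempfile
import xml.etree.ElementTree as ET
from typing import Dict, List

# Hypothetical snapshot of the category tree and its assignments.
CATEGORIES: Dict[str, dict] = {
    "news": {"parent": None, "content": ["a1"]},
    "sports": {"parent": "news", "content": ["a2", "a3"]},
}


def build_index(categories: Dict[str, dict]) -> ET.ElementTree:
    """Turn the flat category data into a nested XML tree."""
    root = ET.Element("categories")
    elements = {cat_id: ET.Element("category", id=cat_id) for cat_id in categories}
    for cat_id, info in categories.items():
        parent = elements[info["parent"]] if info["parent"] else root
        parent.append(elements[cat_id])
        for content_id in info["content"]:
            ET.SubElement(elements[cat_id], "content", id=content_id)
    return ET.ElementTree(root)


def rewrite_index(path: str, categories: Dict[str, dict]) -> None:
    """Replace the index file wholesale rather than patching it."""
    build_index(categories).write(path, encoding="utf-8", xml_declaration=True)


def content_in_category(path: str, category_id: str) -> List[str]:
    """List content assigned to a category or to any of its descendants."""
    root = ET.parse(path).getroot()
    category = root.find(f".//category[@id='{category_id}']")
    if category is None:
        return []
    return [element.get("id") for element in category.iter("content")]


with tempfile.TemporaryDirectory() as tmp:
    index_path = os.path.join(tmp, "categories.xml")
    rewrite_index(index_path, CATEGORIES)
    news_ids = content_in_category(index_path, "news")
    sports_ids = content_in_category(index_path, "sports")

print(news_ids, sports_ids)
```

Descendant assignments come for free from the nesting: asking for the parent category also returns everything assigned to its children.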

Supplemental indexes are extremely common in one aspect of CMS: full-text search. Very rarely does a full-text search access the repository directly, because full-text search requires content in a specific format to run efficiently. In most cases, when content is changed, the content is deleted from and re-inserted in an optimized indexing system such as Lucene, and this is what’s accessed when doing a full text search.

Repository Abstraction

Repository abstraction can help by removing the need to model content at all. In some cases, a CMS’s API can allow you to leave content in an external repository, and just bring it into the CMS in real-time to work with it there.

Repository abstraction swaps out the retrieval location for content. The vast majority of content will be retrieved from the CMS repository itself, but selected content might be retrieved from another storage location then converted to content objects on-the-fly for use in delivery contexts.

For instance, if your university has an extensive database of faculty members, classes, and class assignments, it would be considerable work to re-enter all this information in your CMS and keep it updated. Using repository abstraction, you might be able to make models of just the types and attributes you need, then configure your CMS to dynamically populate objects of those types directly from the external database when content is requested.

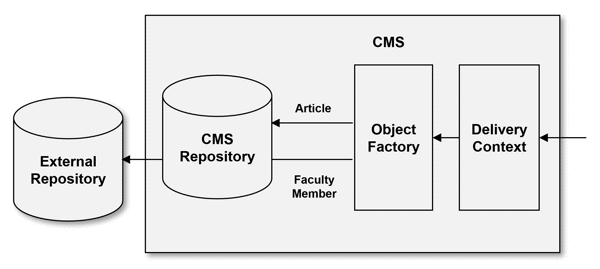

An example of repository abstraction. Starting on the right, an inbound request initiates a delivery context. To form the required content payload, the delivery context requests objects from an “object factory,” which determines in which repository the data for the object(s) resides.

For Article objects (and, likely, most all objects), the factory will retrieve data from the local repository. However, for Faculty Member objects, the factory will reach “out” of the CMS to an external datasource for the data.

Either way, the delivery context (and, certainly, the incoming request itself) has no idea where the content was ultimately sourced from. The delivery context is “abstracted” away from the repository, via the object factory.
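In code, the object factory might be sketched like this. The two dictionaries stand in for the local repository and the external faculty database, and the `ObjectFactory` class is a hypothetical illustration of the pattern rather than a feature of any specific CMS.

```python
from typing import Callable, Dict, Optional

Loader = Callable[[str], Optional[dict]]

# Stand-ins for the two storage locations.
LOCAL_REPOSITORY = {"article-1": {"title": "Welcome"}}
FACULTY_DATABASE = {"f-42": {"name": "Dr. Example", "department": "History"}}


class ObjectFactory:
    """Decide, per content type, where an object's data comes from."""

    def __init__(self, default_loader: Loader) -> None:
        self._default = default_loader
        self._overrides: Dict[str, Loader] = {}

    def register(self, type_name: str, loader: Loader) -> None:
        self._overrides[type_name] = loader

    def get(self, type_name: str, object_id: str) -> dict:
        loader = self._overrides.get(type_name, self._default)
        data = loader(object_id)
        if data is None:
            raise KeyError(f"{type_name} {object_id!r} not found")
        # The caller gets the same shape regardless of the source.
        return {"type": type_name, "id": object_id, **data}


factory = ObjectFactory(LOCAL_REPOSITORY.get)
factory.register("Faculty Member", FACULTY_DATABASE.get)

article = factory.get("Article", "article-1")
professor = factory.get("Faculty Member", "f-42")
print(article, professor)
```

The delivery context only ever calls `factory.get`, so it never needs to know which types are sourced externally.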

Content Synchronization

Repository abstraction can be resource- and network-intensive. It requires fast, stable access to the external repository, since the CMS depends on it to fulfill active requests. However, sometimes this isn’t possible or advisable. Perhaps your faculty database is on your internal university network, and you don’t want to allow a constant connection to your public website.

In these cases, it might be better to use content synchronization to automatically duplicate content from your external repository into your CMS.

In the early hours of every morning, a scheduled job might execute in the CMS and synchronize records from the faculty database with content in the repository. This job (1) creates new content objects when necessary, (2) updates existing content objects, and (3) deletes content objects which are no longer represented (a process sometimes called Extract-Transform-Load or ETL).

The resulting objects might be read-only in your CMS, or might have a set of read-only attributes if they’re only partially updated from the external source. The resulting objects are sometimes called proxy objects, as they’re simply representations or “proxies” of data stored elsewhere.
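The three steps of such a job can be sketched in a few lines. Both data sources are plain dictionaries keyed by a shared record ID, which is an assumption. A real job would read from the external database and write through the CMS API.

```python
from typing import Dict


def synchronize(external: Dict[str, dict], repository: Dict[str, dict]) -> Dict[str, int]:
    """Make `repository` mirror `external`, reporting what changed."""
    created = updated = deleted = 0

    for key, record in external.items():
        if key not in repository:
            repository[key] = dict(record)      # (1) create new proxy objects
            created += 1
        elif repository[key] != record:
            repository[key] = dict(record)      # (2) update changed ones
            updated += 1

    for key in list(repository):
        if key not in external:
            del repository[key]                 # (3) delete orphaned ones
            deleted += 1

    return {"created": created, "updated": updated, "deleted": deleted}


faculty_db = {
    "f-1": {"name": "A. Lee", "department": "Physics"},
    "f-2": {"name": "B. Cruz", "department": "History"},
}
cms_content = {
    "f-2": {"name": "B. Cruz", "department": "Classics"},   # out of date
    "f-3": {"name": "C. Park", "department": "Biology"},    # no longer in the source
}

report = synchronize(faculty_db, cms_content)
print(report)
```

Running the job a second time with no source changes should report zero for all three counts, which is a useful sanity check for any synchronization routine.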

Content synchronization inevitably brings up issues of velocity and latency.

- Content velocity refers to how often content changes. “High velocity content” changes very frequently (e.g., a stock price). “Low velocity content” changes rarely (e.g., the privacy policy).

- Content latency is your need for immediacy in reflected changes. If you require low latency, then you need content changes to show in or near real-time. If high latency is okay, then you’re less concerned about seeing changes immediately – eventually is fine.

Every decision about repository abstraction or content synchronization needs to be evaluated against the content’s velocity and your tolerance for latency.

Content Model Reflection

Less common, but occasionally helpful, is the ability to access the content type and attribute structure from code. When programming code is aware of itself, then it can introspect and answer questions about itself. This is called reflection, and the same principle works with content models.

It’s helpful to be able to reflect a content model, particularly for documentation. I’ve been in situations where the best documentation of the content model was code that looped through all the types, all their attributes, all of their attribute settings, their help text, and all the associated validation rules, and then output a master document which served as the ultimate and final representation of the content structure.

Given that the document was generated directly from the repository in real-time, it was guaranteed to be accurate and up-to-date.
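A documentation generator of this kind can be very small. In the sketch below, `MODEL` is a hypothetical stand-in for whatever the CMS's reflection API returns. The type names, attribute settings, and help text are invented for illustration.

```python
from typing import Dict, List

# Hypothetical result of reflecting the content model.
MODEL: Dict[str, List[dict]] = {
    "Article": [
        {"name": "title", "type": "text", "required": True, "help": "Headline shown on the page"},
        {"name": "body", "type": "rich text", "required": True, "help": "Main copy"},
    ],
    "Tweet": [
        {"name": "text", "type": "text", "required": True, "help": "Message to post"},
        {"name": "link", "type": "url", "required": False, "help": "Optional link to include"},
    ],
}


def document_model(model: Dict[str, List[dict]]) -> str:
    """Render every type and attribute as a plain-text reference document."""
    lines: List[str] = []
    for type_name in sorted(model):
        lines.append(type_name)
        for attribute in model[type_name]:
            flag = "required" if attribute["required"] else "optional"
            lines.append(
                f"  - {attribute['name']} ({attribute['type']}, {flag}): {attribute['help']}"
            )
    return "\n".join(lines)


report = document_model(MODEL)
print(report)
```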

Some templating code might benefit from this as well. Say you have several content types – Article, Case Study, Analysis Report, etc. – all possessing the same attribute. This attribute should be rendered into an author’s byline, and you would like to do this in a shared header template, regardless of type. Your template code could query the type definition of the operative content object to determine whether it is one with that attribute, and use that information to show or hide the byline content. You could thereby use the same header template for all content, and trust that it would adapt correctly to the type of content it was rendering.
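A shared header template along these lines might be sketched as follows. The attribute name `author`, the `TYPE_DEFINITIONS` lookup, and the markup are all assumptions made for the example.

```python
from html import escape
from typing import Dict, Set

# Hypothetical reflected type definitions: type name -> attribute names.
TYPE_DEFINITIONS: Dict[str, Set[str]] = {
    "Article": {"title", "body", "author"},
    "Landing Page": {"title", "body"},
}


def render_header(content: dict) -> str:
    """Render a shared header, adding a byline only for types that define one."""
    attributes = TYPE_DEFINITIONS[content["type"]]
    header = f"<h1>{escape(content['title'])}</h1>"
    # Ask the model, not the object, whether a byline applies to this type.
    if "author" in attributes and content.get("author"):
        header += f'<p class="byline">By {escape(content["author"])}</p>'
    return header


article_header = render_header(
    {"type": "Article", "title": "Hello", "author": "Ada Lovelace"}
)
page_header = render_header({"type": "Landing Page", "title": "About"})
print(article_header)
print(page_header)
```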

Recursive Content Models

Occasionally, a system will have a recursive content model, which means the model itself can be modeled – the model is defined in content.

An attribute might itself be a content object representation of an Attribute type. So, the entire model definition is made out of content objects. This means that you might be able to add an attribute to an attribute.

For example, if you wanted to have a supplemental search index, it would be helpful to indicate which attributes on a type should be added to the index. To do this, you might add a “meta-attribute” called Indexable to the Attribute content type on which all other attribute types are based. When assigning that attribute type to a content type, this meta-attribute would effectively become a setting that governs that particular attribute assignment.
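One way to picture this is to treat each attribute assignment as an object that carries its own settings. The classes below are a loose, hypothetical sketch of that idea, with `Indexable` as the meta-attribute. A truly recursive system would store these definitions as content objects rather than Python classes.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class AttributeAssignment:
    """An attribute on a type, carrying its own meta-attributes as settings."""
    name: str
    settings: Dict[str, bool] = field(default_factory=dict)


@dataclass
class ContentType:
    name: str
    attributes: List[AttributeAssignment]


def indexable_fields(content_type: ContentType, content: dict) -> dict:
    """Pick out only the values whose attribute assignment is flagged Indexable."""
    return {
        attribute.name: content[attribute.name]
        for attribute in content_type.attributes
        if attribute.settings.get("Indexable") and attribute.name in content
    }


article_type = ContentType(
    "Article",
    [
        AttributeAssignment("title", {"Indexable": True}),
        AttributeAssignment("body", {"Indexable": True}),
        AttributeAssignment("internal_notes"),   # no meta-attribute set
    ],
)

to_index = indexable_fields(
    article_type,
    {"title": "Hello", "body": "Some text", "internal_notes": "Do not publish"},
)
print(to_index)
```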

This gets complex and abstract but some systems have decided that a content model is, in itself, also content. So why not manage it the same way as other content?

It’s easy to fall down a rabbit hole by patching holes in your content model with API code. Ideally, a system natively supports everything you might want to do with your content. However, in the real world, it’s very helpful to have options when this isn’t otherwise possible. A well-architected API and comprehensive event model can get you out of some sticky situations.

Evaluation Questions

- What is the event model of the system? Can events be captured in code? Are there prior- and post-event handlers?

- Are webhooks an explicit concept in the system?

- Can content be saved into the current version, without creating a new version?

- Can the event model be explicitly bypassed? Meaning, can an action take place that actively suppresses events that would normally occur?

- Is there any built-in support for repository abstraction?

- Are there any features designed to support content synchronization against an external source?

- Can the content model be reflected or introspected from the API?

- Is the content model recursive? Is the model itself modeled?